
Operant conditioning - Wikipedia, the free encyclopedia. Diagram of operant conditioning.

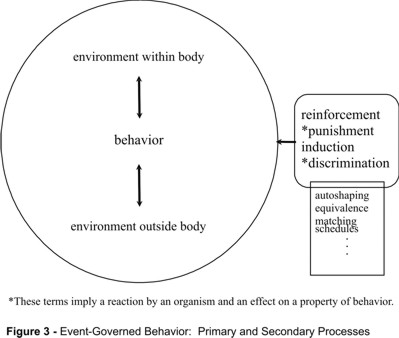

Operant Conditioning (Lecture 6). II. Operant Conditioning. A. Skinner's Analysis. B. F. Skinner expanded the Law of Effect in the 1940s and 1950s into a system called operant conditioning. Operant conditioning is a form of learning.

In operant conditioning, stimuli present when a behavior is rewarded or punished come to control that behavior. For example, a child may learn to open a box to get the candy inside, or learn to avoid touching a hot stove; the box and the stove are discriminative stimuli. In classical conditioning, by contrast, stimuli that signal significant events produce reflexive behavior. For example, the sight of a colorful wrapper can come to act as such a signal.

In Thorndike's puzzle-box experiments, cats had to find a way out of a box. With repeated trials, ineffective responses occurred less frequently and successful responses occurred more frequently, so the cats escaped more and more quickly. Thorndike generalized this finding in his law of effect, which states that behaviors followed by satisfying consequences tend to be repeated and those that produce unpleasant consequences are less likely to be repeated.
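The law of effect can be illustrated with a toy simulation. The sketch below is not Thorndike's procedure or any published model: the function name `simulate_puzzle_box`, the weight-update rule, and all parameter values are assumptions chosen only for illustration. Each candidate response has a weight; the one response that opens the box is strengthened each time it succeeds, and ineffective responses are weakened slightly.

```python
import random


def simulate_puzzle_box(n_trials=30, n_responses=5, correct=0, step=0.5, seed=0):
    """Toy law-of-effect model (illustrative assumption, not a published model).

    Returns (attempts_per_trial, final_weights).
    """
    rng = random.Random(seed)
    weights = [1.0] * n_responses  # initial tendency to emit each response
    attempts_per_trial = []
    for _ in range(n_trials):
        attempts = 0
        while True:
            attempts += 1
            # Emit a response with probability proportional to its weight.
            choice = rng.choices(range(n_responses), weights=weights)[0]
            if choice == correct:
                # Satisfying consequence (escape): strengthen the response.
                weights[choice] += step
                break
            # Ineffective response: weaken it, but never below a small floor.
            weights[choice] = max(0.1, weights[choice] - 0.1 * step)
        attempts_per_trial.append(attempts)
    return attempts_per_trial, weights


if __name__ == "__main__":
    attempts, weights = simulate_puzzle_box()
    print("attempts per trial:", attempts)
    print("final weights:", weights)
```

Plotting the attempts per trial against trial number gives a rough analogue of a learning curve, with the number of attempts standing in for escape time.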

In short, some consequences strengthen behavior and some consequences weaken behavior. That is, responses are retained when they lead to a successful outcome and discarded when they do not, or when they produce aversive effects. This usually happens without being deliberately planned. By plotting escape time against trial number, Thorndike produced the first known animal learning curves.

Following the ideas of Ernst Mach, Skinner rejected Thorndike's reference to unobservable mental states such as satisfaction, building his analysis on observable behavior and its equally observable consequences. Unlike Thorndike's puzzle box, Skinner's experimental arrangement allowed the subject to make one or two simple, repeatable responses, and the rate of such responses became Skinner's primary behavioral measure. Records of these responses were the primary data that Skinner and his colleagues used to explore the effects on response rate of various reinforcement schedules. He also drew on many less formal observations of human and animal behavior, and he defined new functional relationships.

One may ask why a behavior occurs in the first place, before it has ever been reinforced. The answer resembles Darwin's account of how new forms originate: variation followed by selection. Similarly, the behavior of an individual varies from moment to moment, in such aspects as the specific motions involved, the amount of force applied, or the timing of the response. Variations that lead to reinforcement are strengthened, and if reinforcement is consistent, the behavior tends to remain stable. However, behavioral variability can itself be altered through the manipulation of certain variables.

Reinforcement and punishment are defined by their effect on behavior. Either may be positive or negative, as described below. Positive reinforcement and negative reinforcement increase the probability of a behavior that they follow, while positive punishment and negative punishment reduce the probability of a behavior that they follow. There is also an additional procedure, extinction.
Extinction occurs when a previously reinforced behavior is no longer reinforced with either positive or negative reinforcement. During extinction the behavior becomes less probable. Thus there are a total of five basic consequences:

Positive reinforcement (reinforcement): This occurs when a behavior (response) is itself rewarding or is followed by another stimulus that is rewarding, increasing the frequency of that behavior. This procedure is usually called simply reinforcement.

Negative reinforcement (escape): This occurs when a behavior (response) is followed by the removal of an aversive stimulus, thereby increasing that behavior's frequency. In the Skinner box experiment, the aversive stimulus might be a loud noise sounding continuously inside the box; negative reinforcement would happen when the rat presses a lever, turning off the noise.

Positive punishment: This occurs when a behavior (response) is followed by an aversive stimulus, thereby decreasing that behavior's frequency. Positive punishment is a rather confusing term, and usually the procedure is simply called punishment.

Negative punishment: This occurs when a behavior (response) is followed by the removal of a stimulus, thereby decreasing that behavior's frequency.

Extinction: This occurs when a previously reinforced behavior is no longer reinforced. For example, a rat is first given food many times for lever presses, and then the food is withheld. Typically the rat continues to press, more and more slowly, and eventually stops, at which time lever pressing is said to be extinguished.

Reinforcement, punishment, and extinction are not terms whose use is restricted to the laboratory. Naturally occurring consequences can also reinforce, punish, or extinguish behavior and are not always planned or delivered by people.

Factors that alter the effectiveness of reinforcement and punishment. The effectiveness of a consequence decreases when the individual is satiated with that stimulus. The opposite effect will occur if the individual becomes deprived of that stimulus: the effectiveness of a consequence will then increase. If someone is not hungry, food will not be an effective reinforcer for behavior.
Learning may be slower if reinforcement is intermittent, that is, if it follows only some instances of the same response, but responses reinforced intermittently are usually much slower to extinguish than are responses that have always been reinforced. The size of a consequence also matters: a tiny amount of food may not be an effective reinforcer, whereas a pile of quarters from a slot machine may keep a gambler pulling the lever longer than a single quarter. Most of these factors serve biological functions. For example, the process of satiation helps the organism maintain a stable internal environment (homeostasis). When an organism has been deprived of sugar, for example, the taste of sugar is a highly effective reinforcer. However, when the organism's blood sugar reaches or exceeds an optimum level, the taste of sugar becomes less effective, perhaps even aversive.

Shaping. Shaping depends on operant variability and reinforcement, as described above. The trainer starts by identifying the desired final (or target) behavior. Next, the trainer chooses a behavior that the animal or person already emits with some probability. The form of this behavior is then gradually changed across successive trials by reinforcing behaviors that approximate the target behavior more and more closely. When the target behavior is finally emitted, it may be strengthened and maintained by the use of a schedule of reinforcement (see below).

Stimulus control of operant behavior. Stimuli that are present when a behavior is rewarded or punished come to control that behavior. Such stimuli are called discriminative stimuli. That is, discriminative stimuli set the occasion for responses that produce reward or punishment. Thus, a rat may be trained to press a lever only when a light comes on; a dog rushes to the kitchen when it hears the rattle of its food bag; a child reaches for candy when she sees it on a table.

Behavioral sequences: conditioned reinforcement and chaining. The scope of operant analysis is expanded through the idea of behavioral chains, which are sequences of responses bound together by three-term contingencies (discriminative stimulus, response, consequence).
Chaining is based on the fact, experimentally demonstrated, that a discriminative stimulus not only sets the occasion for subsequent behavior but can also reinforce a behavior that precedes it. That is, a discriminative stimulus is also a conditioned reinforcer. For example, the light that sets the occasion for lever pressing may itself be used to reinforce an earlier response, which links the two responses into a sequence. Much longer chains can be built by adding more stimuli and responses.

Escape and avoidance. In escape learning, a behavior terminates an aversive stimulus. For example, shielding one's eyes from sunlight terminates the (aversive) stimulation of bright light in one's eyes. In avoidance learning, a behavior prevents an aversive stimulus from occurring. Avoidance behavior raises the so-called avoidance paradox: how can the non-occurrence of a stimulus serve as a reinforcer? This question is addressed by several theories of avoidance (see below). Two kinds of experimental settings are commonly used: discriminated and free-operant avoidance learning.

Discriminated avoidance learning. In discriminated avoidance, a neutral stimulus is followed by an aversive stimulus such as a shock. After the neutral stimulus appears, an operant response such as a lever press prevents or terminates the aversive stimulus. In early trials the subject does not make the response until the aversive stimulus has come on, so these early trials are called escape trials. As learning progresses, the subject begins to respond during the neutral stimulus and thus prevents the aversive stimulus from occurring. Such trials are called avoidance trials.

Free-operant avoidance learning. In this situation, unlike discriminated avoidance, no prior stimulus signals the shock. Two crucial time intervals determine the rate of avoidance learning. The first is the S-S (shock-shock) interval, the time between successive shocks in the absence of a response. The second is the R-S (response-shock) interval, which specifies the time by which an operant response delays the onset of the next shock. Note that each time the subject performs the operant response, the R-S interval without shock begins anew.

Two-process theory of avoidance. Two processes are involved: classical conditioning of the signal followed by operant conditioning of the escape response.

a) Classical conditioning of fear.
Initially the organism experiences the pairing of a CS (conditioned stimulus) with an aversive US (unconditioned stimulus). The theory assumes that this pairing creates an association between the CS and the US through classical conditioning and, because of the aversive nature of the US, the CS comes to elicit a conditioned emotional reaction (CER), namely fear.

b) Operant conditioning of the escape response. As a result of the first process, the CS now signals fear; this unpleasant emotional reaction serves to motivate operant responses, and responses that terminate the CS are reinforced by fear termination. Note that, on this account, the organism does not avoid the US by anticipating it; rather, it escapes the fear elicited by the CS.

Several experimental findings seem to run counter to two-process theory. For example, avoidance behavior often extinguishes very slowly even when the initial CS-US pairing never occurs again, although the fear response would then be expected to extinguish (see Classical conditioning). Further, animals that have learned to avoid often show little evidence of fear, suggesting that escape from fear is not necessary to maintain avoidance behavior.

In an alternative view, the consequence of an avoidance response is a reduction in the rate of aversive stimulation.

Noncontingent reinforcement may be used in an attempt to reduce an undesired target behavior by reinforcing multiple alternative responses while extinguishing the target response.
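The timing rules of free-operant avoidance described earlier (the S-S and R-S intervals) can be made concrete with a short sketch. The function name `shock_times`, its parameters, and the assumption that the first shock is due one S-S interval after the session starts are all hypothetical choices made for illustration; real procedures may differ in these details.

```python
def shock_times(response_times, ss_interval, rs_interval, session_length):
    """Return the times at which shocks are delivered in a toy
    free-operant avoidance session (illustrative sketch only).

    response_times: times at which the subject emits the operant response
    ss_interval:    time between successive shocks when no response occurs
    rs_interval:    time by which each response postpones the next shock
    """
    responses = sorted(response_times)
    shocks = []
    i = 0
    # Assumption: the first shock is due one S-S interval after session start.
    next_shock = ss_interval
    while next_shock <= session_length:
        if i < len(responses) and responses[i] < next_shock:
            # A response before the scheduled shock restarts the R-S interval.
            next_shock = responses[i] + rs_interval
            i += 1
        else:
            # No response in time: the shock occurs and the S-S clock runs.
            shocks.append(next_shock)
            next_shock += ss_interval
    return shocks


if __name__ == "__main__":
    # No responding: shocks arrive every S-S interval.
    print(shock_times([], ss_interval=5, rs_interval=10, session_length=20))
    # Steady responding postpones most shocks.
    print(shock_times([8, 16, 24], ss_interval=5, rs_interval=10, session_length=30))
```

In this sketch a subject that responds at least once per R-S interval receives no further shocks, which is the sense in which each response starts the shock-free R-S interval anew.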
